Understanding long-form text, such as instructions, stories, and dialogues, requires reasoning about the implicit causal effects of the events it depicts. For example, given an instruction such as “bake the muffins for an hour,” an intelligent agent must be able to anticipate a number of entailed facts (e.g., the muffins are now in the oven; their temperature will increase). While this commonsense reasoning is trivial for humans, most natural language understanding algorithms cannot reason about causal effects that are not mentioned in the surface strings of the text being read.

We’ve developed approaches that aim to model changes in world state, particularly how entities change as they interact with the world. This world-centric modeling of procedural language (i.e., understanding by simulation) abstracts away from the surface strings and complements text-centric modeling of language, which focuses on the syntactic and semantic labeling of surface words (i.e., understanding by labeling).
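
To make the idea of understanding by simulation concrete, here is a toy, rule-based sketch built on the muffin example above. It tracks each entity's attributes and fills in effects that the instruction never states. The action lexicon `ACTION_EFFECTS`, the `Entity` class, and the `simulate` function are hypothetical illustrations: the effects are hand-coded for the example, and the sketch is not a reconstruction of any of the models in the publications listed on this page.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical, hand-written action lexicon: each action maps to the state
# changes it entails for the entity it acts on. This is a toy stand-in for
# knowledge a real system must acquire; it is not taken from any paper.
ACTION_EFFECTS: dict[str, dict[str, str]] = {
    "bake": {"location": "oven", "temperature": "rising"},
    "chill": {"location": "refrigerator", "temperature": "falling"},
    "chop": {"shape": "pieces"},
}


@dataclass
class Entity:
    """An entity tracked through the procedure, with its current attributes."""
    name: str
    state: dict[str, str] = field(default_factory=dict)


def simulate(steps: list[tuple[str, str]]) -> dict[str, Entity]:
    """Apply each (action, entity) step in order and return the world state."""
    world: dict[str, Entity] = {}
    for action, name in steps:
        entity = world.setdefault(name, Entity(name))
        # Fill in effects the text never states; later steps overwrite
        # earlier values for the same attribute. Unknown actions change nothing.
        entity.state.update(ACTION_EFFECTS.get(action, {}))
    return world
```

For example, `simulate([("bake", "muffins")])["muffins"].state` contains a `location` and a `temperature` entry even though the instruction mentions neither, whereas a labeling-only view of the same sentence sees just a verb and its object.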

Associated Publications

Lianhui Qin, Vered Shwartz, Peter West, Chandra Bhagavatula, Jena D. Hwang, Ronan Le Bras, Antoine Bosselut, Yejin Choi (2020). Back to the Future: Unsupervised Backprop-based Decoding for Counterfactual and Abductive Commonsense Reasoning. Proceedings of the Conference on Empirical Methods in Natural Language Processing (EMNLP).

Aida Amini, Antoine Bosselut, Bhavana Dalvi Mishra, Yejin Choi, Hannaneh Hajishirzi (2020). Procedural Reading Comprehension with Attribute-Aware Context Flow. Proceedings of the 2nd Conference on Automated Knowledge Base Construction (AKBC). Best Paper Runner-up.

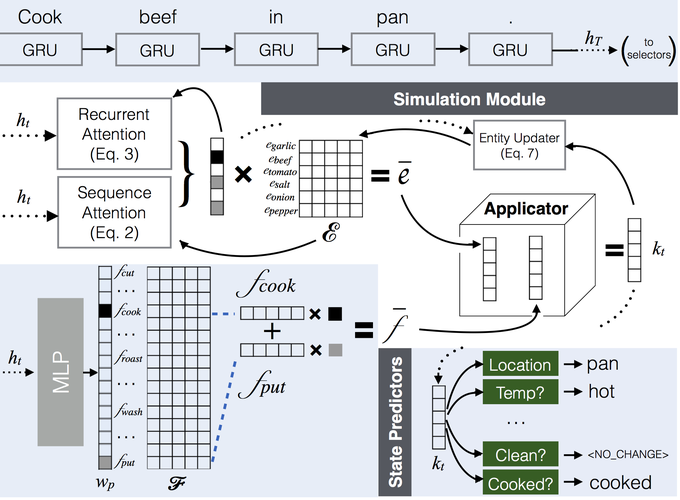

Antoine Bosselut, Omer Levy, Ari Holtzman, Corin Ennis, Dieter Fox, Yejin Choi (2018). Simulating Action Dynamics with Neural Process Networks. Proceedings of the 6th International Conference on Learning Representations (ICLR).

Bhavana Dalvi Mishra, Niket Tandon, Antoine Bosselut, Wen-tau Yih, Peter Clark (2019). Everything Happens for a Reason: Discovering the Purpose of Actions in Procedural Text. Proceedings of the Conference on Empirical Methods in Natural Language Processing (EMNLP).

Niket Tandon, Bhavana Dalvi Mishra, Keisuke Sakaguchi, Antoine Bosselut, Peter Clark (2019). WIQA: A dataset for “What if…” reasoning over procedural text. Proceedings of the Conference on Empirical Methods in Natural Language Processing (EMNLP).

Xinya Du, Bhavana Dalvi Mishra, Niket Tandon, Antoine Bosselut, Wen-tau Yih, Peter Clark, Claire Cardie (2019). Be Consistent! Improving Procedural Text Comprehension using Label Consistency. Proceedings of the 17th Annual Conference of the North American Chapter of the Association for Computational Linguistics (NAACL).

Hannah Rashkin, Antoine Bosselut, Maarten Sap, Kevin Knight, Yejin Choi (2018). Modeling Naive Psychology of Characters in Simple Commonsense Stories. Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics (ACL).

Niket Tandon, Bhavana Dalvi Mishra, Joel Grus, Wen-tau Yih, Antoine Bosselut, Peter Clark (2018). Reasoning about Actions and State Changes by Injecting Commonsense Knowledge. Proceedings of the Conference on Empirical Methods in Natural Language Processing (EMNLP).